As Workplace AI Surges, Enterprises Turn to Monitoring Tools to Track, Control and Govern Employee AI Usage

New research highlights rising risks of “shadow AI,” data leakage, and policy violations as employees increasingly use tools like ChatGPT, Copilot, and Gemini

TORONTO, ONTARIO, CANADA, April 8, 2026 /EINPresswire.com/ -- The rapid adoption of generative AI tools such as ChatGPT, Microsoft Copilot, and Google Gemini is creating a new challenge for enterprises: how to monitor, analyze, and control how employees use AI in the workplace. While AI is driving productivity gains, it is also introducing unprecedented visibility gaps, data security risks, and compliance concerns. Organizations are now shifting focus from whether employees should use AI to how they are using it, and what risks it creates.

The Rise of “Shadow AI” in the Workplace

Employees are adopting AI tools faster than organizations can govern them. Recent industry findings show: Up to 77% of employees have entered sensitive company data into AI tools. Many employees use AI tools without IT approval or visibility (“shadow AI”) AI-related insider risks are often unintentional but highly damaging. In many cases, employees are simply trying to work faster, summarizing documents, generating code, or drafting emails, without realizing that they may be exposing confidential data to external AI systems.

A New Category: AI Usage Monitoring

This shift has led to the emergence of a new category: AI usage monitoring and governance

Unlike traditional employee monitoring, this approach focuses on:

-Tracking which AI tools employees access

-Analyzing how often and how they are used

-Monitoring what type of data is being shared with AI systems

-Detecting policy violations and risky behavior

AI usage monitoring provides organizations with critical visibility into one of the fastest growing blind spots in modern IT environments.

Why Monitoring AI Usage Is Now Critical

Generative AI introduces risks that traditional security tools were not designed to handle:

1. Data Leakage Through Prompts

Employees frequently paste sensitive data, customer information, financials, source code, into AI tools, potentially exposing it externally.

2. Unapproved AI Tool Usage

AI tools are easily accessible via browsers, allowing employees to bypass IT controls and use unauthorized platforms.

3. Intellectual Property Exposure

Proprietary business data shared with AI systems may be stored, processed, or reused outside organizational control.

4. Compliance and Regulatory Risks

Unmonitored AI usage can lead to violations of data protection laws such as GDPR, HIPAA, and CCPA.

How CurrentWare Helps Organizations Control AI Usage

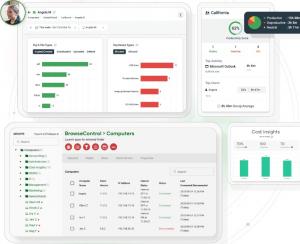

CurrentWare enables organizations to monitor, analyze, and enforce policies around employee AI usage, turning AI from a risk into a controlled asset. The financial impact of unmonitored AI usage is significant. While many tools focus only on visibility, CurrentWare combines monitoring, enforcement, and workforce analytics to deliver both control and measurable ROI. With solutions such as BrowseReporter and AccessPatrol, organizations can:

-Track AI tool usage across endpoints (ChatGPT, Copilot, Gemini, etc.)

-Detect shadow AI activity and unauthorized tool adoption

-Monitor interactions and usage patterns for policy compliance

-Prevent sensitive data transfers via AI tools

-Enforce acceptable AI usage policies across teams

By combining visibility with enforcement, CurrentWare helps organizations gain control over how AI is used, without blocking innovation. Global breach cost ~$4.4M–$4.88M. 40% breaches involve multi-environment systems. Operational disruption is a major cost driver

From AI Adoption to AI Governance

The reality is clear: employees are already using AI, often extensively, and often without oversight. The challenge for enterprises is no longer adoption, but governance. CurrentWare is recognized on G2 as a Top Employee Monitoring Software Based on Verified Customer Reviews.

"This software helps us to achieve compliance with industry and government requirements with respect to controlling the use of removable storage media. It fits the bill perfectly."

Matthew W., Project Manager

Aviation & Aerospace Industry, 11-50 employees

Balancing Innovation and Control

Forward looking organizations are taking a balanced approach:

-Enable AI for productivity gains

-Monitor usage for visibility and risk detection

-Enforce policies to prevent misuse

-Educate employees on responsible AI usage

This approach allows businesses to unlock AI’s value while minimizing its risks.

The Future: AI Accountability in the Workplace

As AI becomes embedded in everyday workflows, organizations will increasingly be judged not just on how they use AI, but how responsibly they manage it. AI usage monitoring is quickly becoming a foundational capability for:

-Security teams

-IT leadership

-Compliance and legal departments

-Executive decision-makers

The companies that succeed will be those that combine AI adoption with AI accountability. Organizations looking to monitor AI usage, reduce risk, and gain visibility into workforce behavior can explore CurrentWare’s workforce intelligence platform.

About CurrentWare

CurrentWare provides employee monitoring, data loss prevention, and workforce analytics solutions that help organizations improve productivity, reduce insider risk, and maintain compliance. Its platform enables businesses to gain visibility into employee activity, including AI usage, while supporting transparent and policy driven workplace practices.

Abhey Rana

CurrentWare

+91 90411 12299

email us here

Visit us on social media:

LinkedIn

Save and Monitor AI costs with CurrentWare

Legal Disclaimer:

EIN Presswire provides this news content "as is" without warranty of any kind. We do not accept any responsibility or liability for the accuracy, content, images, videos, licenses, completeness, legality, or reliability of the information contained in this article. If you have any complaints or copyright issues related to this article, kindly contact the author above.